Lossfunk

We're a lab based in Bangalore, India, focused on foundational questions about artificial and biological intelligence. Read our charter to learn more.

Programs

- Research Fellowship (for pre-docs and postdocs): a 1-year, full-time fellowship based in Bangalore

- Research Internship (for students): a 3- to 6-month internship for undergraduate and graduate students interested in AI research

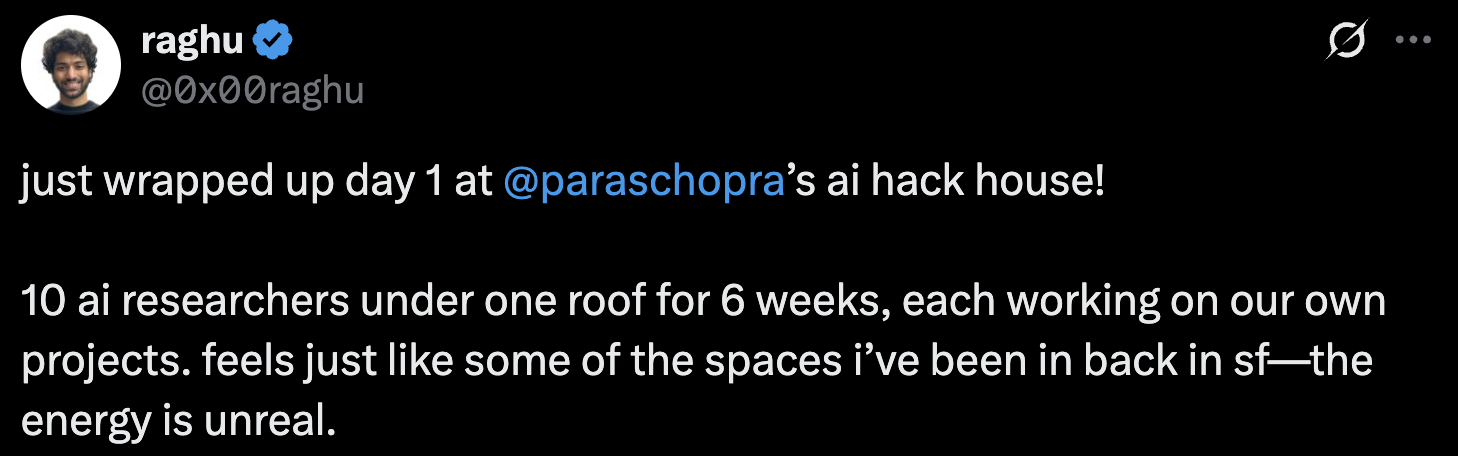

- Residency (for curious minds): a 6-week, on-site, curiosity-led exploration of AI and related topics

Lab's focus

We define intelligent systems as those that achieve their goals even in situations they haven’t encountered before. At Lossfunk, we want to study how intelligence manifests in biological systems and use that inspiration to build artificial systems that go beyond today's AI.

For us, this translates into three broad directions:

- AI that adapts to a domain: continual learning, memory, test-time adaptation, active learning, sample efficiency, efficient training or inference, personalization, curiosity, exploration, agency, autonomy, out-of-distribution (OOD) generalization, curriculum learning, meta-learning, uncertainty modeling

- Creativity in artificial systems: novelty, creativity, representations, data manifold, extrapolation, surprise, world models, recombination, concept modeling, scientific theory building, innovation, abstractions, program synthesis, knowledge representation, taste

- Biological foundations of intelligence: consciousness, predictive processing, evolution, multi-agent systems, artificial life, origins of language, developmental cognition, neuroscience, representations, anthropology

Check out this document for a detailed description of our research focus areas.

Papers

- Consciousness • AI Consciousness Requires Validated Models of Human Consciousness [AAAI 2026 Symposium on Machine Consciousness]

- World Models • See, Symbolize, Act: Grounding VLMs with Spatial Representations for Better Gameplay [LM Reasoning Workshop at AAAI, 2026] • (website, code)

- LLMs • EsoLang-Bench: Evaluating Genuine Reasoning in Large Language Models via Esoteric Programming Languages [Logical Reasoning and ICBINB workshops at ICLR, 2026] • (website, code, dataset)

- LLMs • Making Large Language Models Speak Tulu: Structured Prompting for an Extremely Low-Resource Language Tulu [LoResLM Workshop at EACL, 2026] • (code)

- Agents • Automated Stateful Specialization for Adaptive Agent Systems [⭐ ICLR main conference, 2026]

- Benchmarks • ISO-Bench: Can Coding Agents Optimize Real-World Inference Workloads? [VerifAI-2 Workshop at ICLR, 2026] • (website, code, dataset)

- Bias in AI • Language Models Entangle Language and Culture [Language Models for Underserved Communities Workshop at AAAI, 2026] • (website, code, dataset)

- Ethics • Building Interpretable Models for Moral Decision-Making [Machine Ethics Workshop at AAAI, 2026] • (code)

- AI for science • METIS: Mentoring Engine for Thoughtful Inquiry & Solutions [AI for Scientific Research Workshop at AAAI, 2026] • (code)

- AI for science • Why LLMs Aren't Scientists Yet: Lessons from Four Autonomous Research Attempts [arXiv preprint, 2026] • (website, AI-written paper, prompts)

- LLMs • Future Is Unevenly Distributed: Forecasting Ability of LLMs Depends on What We're Asking [Assessing and Improving Reliability of Foundation Models Workshop at AAAI, 2026]

- LLMs • The Sequential Edge: Inverse-Entropy Voting Beats Parallel Self-Consistency at Matched Compute [Efficient Reasoning Workshop at NeurIPS, 2025]

- LLMs • Think Just Enough: Sequence-Level Entropy as a Confidence Signal for LLM Reasoning [Foundations of Reasoning Models Workshop at NeurIPS, 2025]

- LLMs • IPO: Your Language Model is Secretly a Preference Classifier [⭐ ACL main conference, 2025] • (code)

Releasing soon

- Philosophy • Metaphysical Concepts Should Be Judged by Consequences [preprint out soon]

- RL • Discovering Reinforcement Learning Interfaces with Large Language Models [preprint out soon]

To get notified when we post preprints of our papers, join our WhatsApp community (we post about once a week).

Talks

We regularly host talks on AI and related topics at our office in Bangalore and online.

Past talks are available on our YouTube channel.

To get notified about upcoming talks, join our WhatsApp community (we post about once a week).


About

Hi! I'm Paras, founder of Lossfunk. I'm a newly minted AI researcher [my Google Scholar profile] and a builder [my GitHub profile].

Previously, I founded Wingify, bootstrapped it to $50mn ARR, and then exited by selling it to a private equity group.

-[--->+<]>---.+[----->+++<]>.----.+++.+[--->+<]>++.-[---->+<]>++.---[->++++<]>.-----.[--->+<]>-----.++++++[->++<]>.+[--->+<]>.++++..-------------.-[--->+<]>--.-------.---.[->+++<]>--.

Stay curious :)


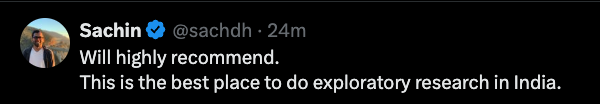

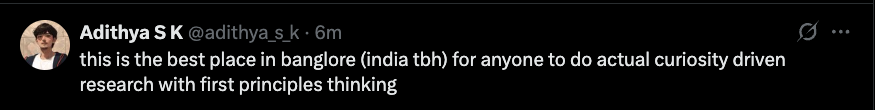

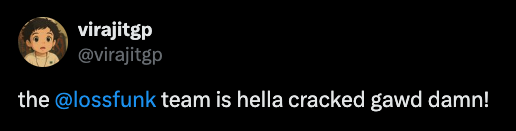

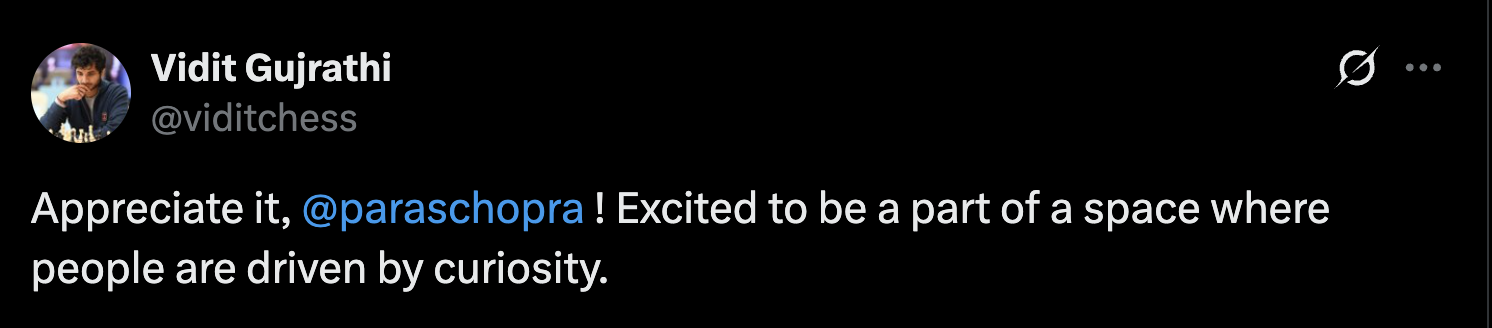

Still there? Here's a wall of testimonials.

^^^ World #25 player in chess